WHAT THE RESEARCH IS:

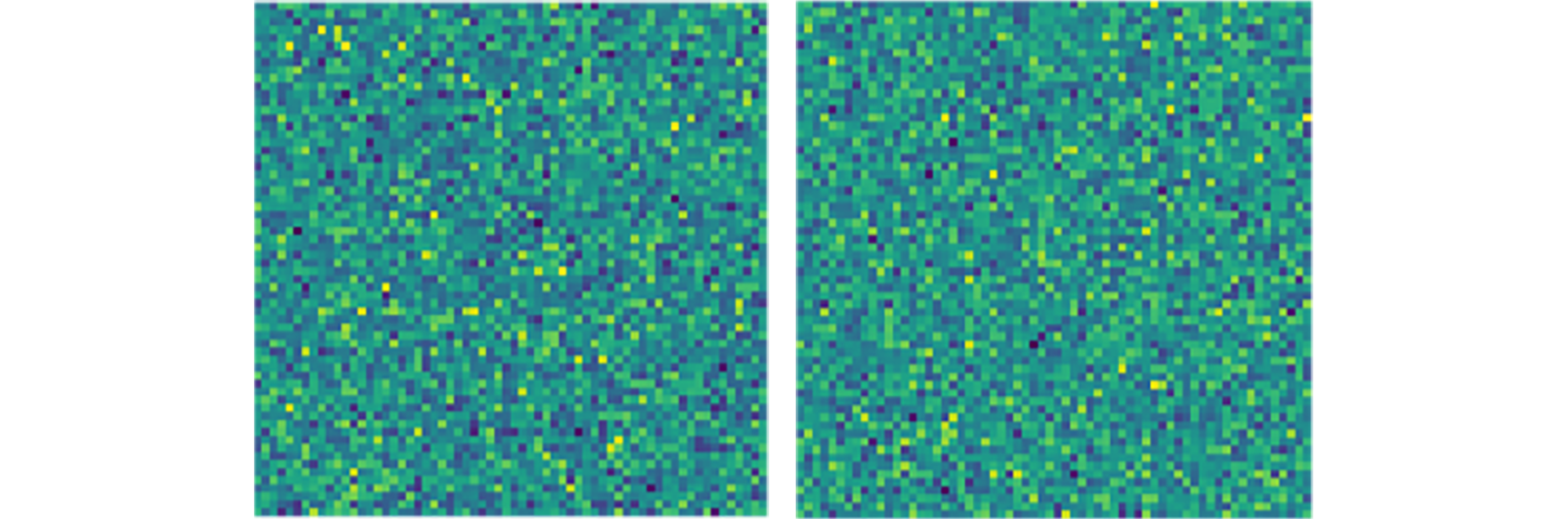

A study of language learning in which AI agents learn to communicate about images by exchanging symbols. The surprising finding is that the agents aren’t developing an understanding of the relationship between images and words. Contrary to previous findings, the agents are relying only on low-level similarities between the images’ features and have no conceptual grasp of what the different images represent (e.g., whether an image shows a cat or a couch).

HOW IT WORKS:

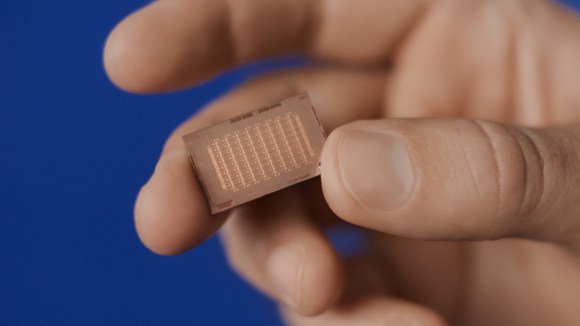

Our researchers trained agents on the same games used in previous research, in which a pair of agents communicate about images using a fixed-size vocabulary. Those previous studies suggested that the agents developed a shared understanding of what the images represented; our researchers instead found that the agents extracted no concept-level information. The paired agents could reach a consensus about an image based solely on low-level feature similarities, without determining, for example, that pictures of a Boston terrier and a Chihuahua both show dogs. In fact, the agents were able to reach a consensus even when presented with similar patterns of visual noise that contained no recognizable objects.
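To make the idea concrete, here is a toy sketch of a two-agent image game played on pure noise. It is not the setup from the paper: the paper's agents are trained, whereas this sketch hard-codes a shared set of random "prototype" vectors as a stand-in for whatever a trained pair might converge on. All names and constants (`VOCAB_SIZE`, `DIM`, `sender`, `receiver`, `play`) are hypothetical and chosen only for illustration. The point is that a sender and receiver can agree on a target more often than chance using nothing but distances in feature space, with no objects or categories involved.

```python
import random

VOCAB_SIZE = 16  # hypothetical fixed vocabulary size (assumption)
DIM = 8          # hypothetical feature dimensionality (assumption)


def sq_dist(a, b):
    """Squared Euclidean distance between two feature vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def noise_vector(rng):
    """A 'noise image': Gaussian features with no object or category behind them."""
    return [rng.gauss(0.0, 1.0) for _ in range(DIM)]


def sender(target, prototypes):
    """Emit the symbol whose prototype is nearest to the target's features."""
    return min(range(len(prototypes)), key=lambda s: sq_dist(target, prototypes[s]))


def receiver(symbol, candidates, prototypes):
    """Pick the candidate whose features are nearest to the symbol's prototype."""
    proto = prototypes[symbol]
    return min(range(len(candidates)), key=lambda i: sq_dist(candidates[i], proto))


def play(n_rounds=2000, seed=0):
    """Play the game on noise only and return the fraction of successful rounds."""
    rng = random.Random(seed)
    # Shared prototypes: a hand-built stand-in for a learned symbol-to-feature mapping.
    prototypes = [noise_vector(rng) for _ in range(VOCAB_SIZE)]
    wins = 0
    for _ in range(n_rounds):
        candidates = [noise_vector(rng), noise_vector(rng)]  # target + distractor
        target_idx = rng.randrange(2)
        symbol = sender(candidates[target_idx], prototypes)
        guess = receiver(symbol, candidates, prototypes)
        wins += guess == target_idx
    return wins / n_rounds


if __name__ == "__main__":
    print(f"success rate on pure noise: {play():.2f} (chance is 0.50)")
```

Because the symbol only encodes which region of feature space the target falls in, the success rate in this toy should land above the 50% chance level even though the inputs depict nothing at all, which mirrors the noise result described above in miniature.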

WHY IT MATTERS:

Refining experimental methodologies is important for the long-term goal of creating systems that develop more natural language-based communication. This work improves our understanding of the visual semantics agents use, which allows us to design future setups in which agents have stronger reasons to develop more natural communication strategies.

READ THE FULL PAPER:

How agents see things: On visual representations in an emergent language game